February 2026

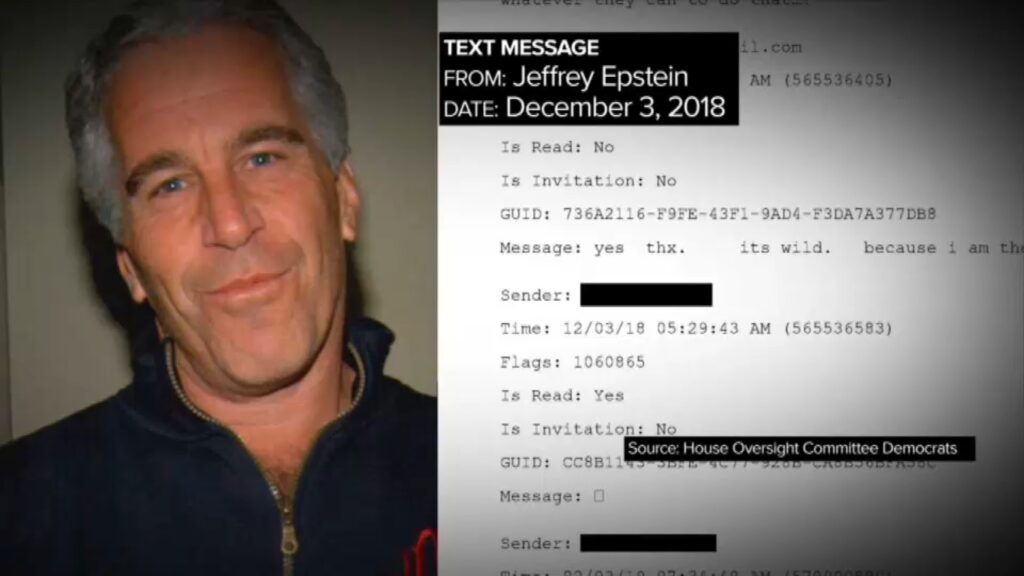

In early 2026, the re-release of documents related to the case of Jeffrey Epstein triggered a strong wave of media attention. Reactions were global, but particularly intense in Western countries and in the Western Balkans. The region already operates with a low threshold of trust in institutions. That’s why every shock acts as a high-priority signal in an overloaded system. The information space reacts like a network under a DDoS attack. Traffic spikes. Filtering weakens. Mistakes become more likely.

Formally, the documents are treated as archival material. In practice, modern technologies are changing the way such material is read, interpreted, and distributed. Generative artificial intelligence and deepfake tools make it possible to produce content that looks authentic, but has no demonstrable connection to reality. The boundary between evidence and simulation becomes porous. It is no longer just a political scandal. It is the operational environment in which algorithmic politics is conducted. Information is not published just to be known, but to produce an effect.

Today’s artificial intelligence tools can synthesize a voice, a face, or handwriting with a high degree of fidelity. They can generate documents that pass a cursory forensic check. In regions with weak verification mechanisms, such material is treated as fact before any analysis is carried out. The Western Balkans is particularly vulnerable. Social networks are the primary channel of political mobilization. Traditional media often react with a delay. A centralized regulator capable of real-time filtering is practically nonexistent. The result is the fragmentation of the information field.

Citizens receive data through personalized algorithmic filters. Those filters optimize engagement, not truth. Polarization intensifies as each user sees a version of reality compatible with their own views. Trust in institutions then functions as the system’s digital immunity. When it declines, society becomes susceptible to infection by misinformation. One false artifact can have more impact than ten confirmed facts.

The case of the Epstein documents shows how artificial intelligence is becoming an instrument of both foreign political competition and internal power struggles. Major powers are developing standards, ethical frameworks and technical protocols for identifying synthetic content. Small countries are lagging behind. Their institutional capacities resemble outdated operating systems without security patches. Each incident tests the state’s ability to preserve digital sovereignty. If the state fails the test, the consequences are not only reputational. They can also affect politics, the economy and security.

Social networking platforms function as algorithmic matrices optimized for emotional response. Virality is a function of excitement, not credibility. The Balkans are already burdened by historical traumas and deep political divisions. That is why such content acts as an impulse in a resonant circuit. A small initial energy can produce a powerful wave. A single video, authentic or synthetic, is enough to trigger protests or destabilize a political campaign.

Political organizations use this dynamic as a tool. Materials implying corruption, moral deviance or international conspiracies are distributed rapidly, without full verification. Even when the content is later shown to have been manipulated, the initial effect remains. The information system remembers the first record. The correction has a smaller reach than the original. The authorities are trying to respond with legal measures and counter-narratives, but their effectiveness is limited. The problem is further complicated by the encryption of power: non-transparent decision-making centers that reduce the credibility of official denials.

Digital diplomacy, therefore, becomes an important instrument. States are balancing three goals: preserving stability, protecting reputation, and controlling the spread of unverified information. It is a complex task. Excessive control resembles censorship. Too little control looks like capitulation to information chaos. The sweet spot is narrow, like a security window in a cryptographic protocol.

The media response is not uniform. Some newsrooms conduct in-depth analysis of the documents, check sources, and consult experts. Others focus on sensationalism and viral potential. The combination of serious journalism and superficial reporting creates a heterogeneous ecosystem. Political actors must quickly distinguish authentic content from synthetic content. An error in judgment can have strategic consequences.

The Western Balkans functions as a testbed for new digital manipulation techniques. Parties, media, and activist networks are rapidly adopting tools that increase the reach of their messages. The technology is cheap, accessible, and easy to use. The consequences can be serious. Any leak or compromising material can change the political dynamic within hours. In such an environment, digital sovereignty is not an abstract concept. It is a matter of political survival.

The security aspect goes beyond public opinion. Synthetic content can affect personal data, financial flows and the coordination of political actions. Attacks on the information space are often a prelude to broader pressure operations. A state that does not have the capacity to analyze algorithmic flows loses situational awareness. Without it, crisis management becomes improvisation.

The Epstein files are therefore not just another scandal. They are an indicator of the transition to a new phase of political communication. Generative artificial intelligence and deepfake technology are changing the rules of the game. Truth is no longer just a matter of evidence. It becomes a question of verification capacities and trust in the system that carries out the verification.

For the countries of the Western Balkans, the key challenge is building technical resilience. This includes the development of forensic tools, regulatory frameworks and public education. It is also necessary to improve cooperation between the academic community and the technology sector. Without that cooperation, the political space remains open to manipulation.

Effective politics in the digital age is like a well-designed security system. It must have detection, prevention and response. It must anticipate scenarios, not just react to incidents. And it must maintain credibility, because without trust no defense works.

The analysis of this phenomenon, therefore, must not be superficial. It is necessary to combine an understanding of technology, mass psychology and geopolitical context. Information has become an operational resource, and control over its flow a key form of power. Societies that understand this can maintain stability. Those that do not become a testing ground for other people’s experiments.

In an era when reality can be simulated, political security depends on the ability to distinguish signal from noise. It is a task that no longer belongs only to journalists or politicians. It is the task of the whole system. If the system fails, the consequences will not be virtual. They will be very real.

Author: Aleksandar Stanković